How to Read a Txt File of Char to an Array in Java

Reading files in Java is the cause of a lot of confusion. There are multiple ways of accomplishing the same task and it's often not clear which file reading method is best to use. Something that's quick and dirty for a small example file might not be the best method to use when you need to read a very large file. Something that worked in an earlier Java version might not be the preferred method anymore.

This article aims to be the definitive guide for reading files in Java 7, 8 and 9. I'm going to cover all the ways you can read files in Java. Too often, you'll read an article that tells you one way to read a file, only to discover later there are other ways to do that. I'm actually going to cover 15 different ways to read a file in Java. I'm going to cover reading files in multiple ways with the core Java libraries as well as two third party libraries.

But that's not all – what good is knowing how to do something in multiple ways if you don't know which way is best for your situation?

I also put each of these methods to a real performance test and document the results. That way, you will have some hard data to know the performance metrics of each method.

Methodology

JDK Versions

Java code samples don't live in isolation, especially when it comes to Java I/O, as the API keeps evolving. All code for this article has been tested on:

- Java SE 7 (jdk1.7.0_80)

- Java SE 8 (jdk1.8.0_162)

- Java SE 9 (jdk-9.0.4)

When there is an incompatibility, it will be stated in that section. Otherwise, the code works unaltered for different Java versions. The main incompatibility is the use of lambda expressions, which were introduced in Java 8.

Java File Reading Libraries

There are multiple ways of reading from files in Coffee. This article aims to be a comprehensive collection of all the different methods. I will cover:

- java.io.FileReader.read()

- java.io.BufferedReader.readLine()

- java.io.FileInputStream.read()

- java.io.BufferedInputStream.read()

- java.nio.file.Files.readAllBytes()

- java.nio.file.Files.readAllLines()

- java.nio.file.Files.lines()

- java.util.Scanner.nextLine()

- org.apache.commons.io.FileUtils.readLines() – Apache Commons

- com.google.common.io.Files.readLines() – Google Guava

Closing File Resources

Prior to JDK7, when opening a file in Java, all file resources would need to be manually closed using a try-catch-finally block. JDK7 introduced the try-with-resources statement, which simplifies the process of closing streams. You no longer need to write explicit code to close streams because Java will automatically close the stream for you, whether an exception occurred or not. All examples in this article use the try-with-resources statement for importing, loading, parsing and closing files.
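To make the difference concrete, here is a minimal sketch that reads the first line of some text both ways. The class and method names are made up for illustration, and a StringReader stands in for a real file so the snippet runs anywhere.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.StringReader;

public class CloseResourcesSketch {

    // Pre-Java 7 style: the reader has to be closed by hand in a finally block.
    static String firstLineManualClose(String text) throws IOException {
        BufferedReader reader = new BufferedReader(new StringReader(text));
        try {
            return reader.readLine();
        } finally {
            reader.close();
        }
    }

    // Java 7+ style: the reader is closed automatically when the try block exits.
    static String firstLineTryWithResources(String text) throws IOException {
        try (BufferedReader reader = new BufferedReader(new StringReader(text))) {
            return reader.readLine();
        }
    }

    public static void main(String[] args) throws IOException {
        System.out.println(firstLineManualClose("alpha\nbeta"));
        System.out.println(firstLineTryWithResources("alpha\nbeta"));
    }
}
```

Both methods return the same value; the second one simply has less ceremony around closing the reader.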

File Location

All examples will read test files from C:\temp.

Encoding

Character encoding is not explicitly saved with text files so Java makes assumptions about the encoding when reading files. Usually, the assumption is correct but sometimes you want to be explicit when instructing your programs to read from files. When the encoding isn't correct, you'll see funny characters appear when reading files.

All examples for reading text files use two encoding variations:

- Default system encoding, where no encoding is specified

- Explicit encoding, set to UTF-8
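As a rough illustration of those "funny characters", the sketch below decodes the same UTF-8 bytes twice: once as UTF-8 and once as ISO-8859-1. The class name, the decode helper and the sample word are assumptions chosen for the demo; they are not part of the article's code.

```java
import java.nio.charset.Charset;
import java.nio.charset.StandardCharsets;

public class EncodingSketch {

    // Hypothetical helper: turns raw bytes into text using the given encoding.
    static String decode(byte[] bytes, Charset charset) {
        return new String(bytes, charset);
    }

    public static void main(String[] args) {
        // "caf\u00e9" is "cafe" with an accented e, which takes two bytes in UTF-8.
        byte[] utf8Bytes = "caf\u00e9".getBytes(StandardCharsets.UTF_8);

        System.out.println("Default charset: " + Charset.defaultCharset());
        System.out.println("Decoded as UTF-8:      " + decode(utf8Bytes, StandardCharsets.UTF_8));
        // Wrong encoding: the two-byte character is shown as two separate characters.
        System.out.println("Decoded as ISO-8859-1: " + decode(utf8Bytes, StandardCharsets.ISO_8859_1));
    }
}
```

What the console actually displays depends on your terminal's own encoding, but the wrongly decoded string is one character longer than the original.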

Download Code

All code files are available from GitHub.

Code Quality and Code Encapsulation

There is a difference between writing code for your personal or work project and writing code to explain and teach concepts.

If I were writing this code for my own project, I would use proper object-oriented principles like encapsulation, abstraction, polymorphism, etc. But I wanted to make each example stand alone and easily understood, which meant that some of the code has been copied from one example to the next. I did this on purpose because I didn't want the reader to have to figure out all the encapsulation and object structures I so cleverly created. That would take away from the examples.

For the same reason, I chose NOT to write these examples with a unit testing framework like JUnit or TestNG because that's not the purpose of this article. That would add another library for the reader to understand that has nothing to do with reading files in Java. That's why all the examples are written inline inside the main method, without extra methods or classes.

My main purpose is to make the examples as easy to understand as possible and I believe that having extra unit testing and encapsulation code will not help with this. That doesn't mean that's how I would encourage you to write your own personal code. It's just the way I chose to write the examples in this article to make them easier to understand.

Exception Handling

All examples declare any checked exceptions in the throwing method declaration.

The purpose of this article is to show all the different ways to read from files in Java – it's not meant to show how to handle exceptions, which will be very specific to your situation.

So instead of creating unhelpful try-catch blocks that just print exception stack traces and clutter up the code, all examples will declare any checked exception in the calling method. This will make the code cleaner and easier to understand without sacrificing any functionality.

Future Updates

As Java file reading evolves, I will be updating this article with any required changes.

File Reading Methods

I organized the file reading methods into three groups:

- Classic I/O classes that have been part of Java since before JDK 1.7. This includes the java.io and java.util packages.

- New Java I/O classes that have been part of Java since JDK 1.7. This covers the java.nio.file.Files class.

- Third party I/O classes from the Apache Commons and Google Guava projects.

Classic I/O – Reading Text

1a) FileReader – Default Encoding

FileReader reads in one character at a time, without any buffering. It's meant for reading text files. It uses the default character encoding on your system, so I have provided examples for both the default case, as well as specifying the encoding explicitly.

    import java.io.FileReader;
    import java.io.IOException;

    public class ReadFile_FileReader_Read {
        public static void main(String[] pArgs) throws IOException {
            String fileName = "c:\\temp\\sample-10KB.txt";

            try (FileReader fileReader = new FileReader(fileName)) {
                int singleCharInt;
                char singleChar;
                while ((singleCharInt = fileReader.read()) != -1) {
                    singleChar = (char) singleCharInt;

                    //display one character at a time
                    System.out.print(singleChar);
                }
            }
        }
    }
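If the goal is to end up with the file's characters in an array rather than on the console, one possible approach is to read in chunks and collect them with a CharArrayWriter. This is a sketch, not the article's tested code: the class name and the readChars helper are hypothetical, and a small temporary file stands in for the article's sample file.

```java
import java.io.CharArrayWriter;
import java.io.FileReader;
import java.io.IOException;
import java.io.Reader;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;

public class ReadFileToCharArraySketch {

    // Hypothetical helper: drains any Reader into a char array, one chunk at a time.
    static char[] readChars(Reader reader) throws IOException {
        CharArrayWriter collected = new CharArrayWriter();
        char[] chunk = new char[4096];
        int charsRead;
        while ((charsRead = reader.read(chunk)) != -1) {
            collected.write(chunk, 0, charsRead);
        }
        return collected.toCharArray();
    }

    public static void main(String[] args) throws IOException {
        // A small temporary file stands in for the article's sample file.
        Path sample = Files.createTempFile("sample", ".txt");
        Files.write(sample, "hello".getBytes(StandardCharsets.US_ASCII));

        try (FileReader fileReader = new FileReader(sample.toFile())) {
            char[] chars = readChars(fileReader);
            System.out.println(chars.length + " characters read");
        } finally {
            Files.delete(sample);
        }
    }
}
```

Because readChars accepts any Reader, the same helper works with an InputStreamReader when the encoding has to be set explicitly.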

1b) FileReader – Explicit Encoding (InputStreamReader)

It's actually not possible to set the encoding explicitly on a FileReader in Java 7, 8 or 9, so you have to use the parent class, InputStreamReader, and wrap it around a FileInputStream:

    import java.io.FileInputStream;
    import java.io.IOException;
    import java.io.InputStreamReader;

    public class ReadFile_FileReader_Read_Encoding {
        public static void main(String[] pArgs) throws IOException {
            String fileName = "c:\\temp\\sample-10KB.txt";
            FileInputStream fileInputStream = new FileInputStream(fileName);

            //specify UTF-8 encoding explicitly
            try (InputStreamReader inputStreamReader =
                    new InputStreamReader(fileInputStream, "UTF-8")) {

                int singleCharInt;
                char singleChar;
                while ((singleCharInt = inputStreamReader.read()) != -1) {
                    singleChar = (char) singleCharInt;
                    System.out.print(singleChar); //display one character at a time
                }
            }
        }
    }

2a) BufferedReader – Default Encoding

BufferedReader reads an entire line at a time, instead of one character at a time like FileReader. It's meant for reading text files.

    import java.io.BufferedReader;
    import java.io.FileReader;
    import java.io.IOException;

    public class ReadFile_BufferedReader_ReadLine {
        public static void main(String[] args) throws IOException {
            String fileName = "c:\\temp\\sample-10KB.txt";
            FileReader fileReader = new FileReader(fileName);

            try (BufferedReader bufferedReader = new BufferedReader(fileReader)) {
                String line;
                while ((line = bufferedReader.readLine()) != null) {
                    System.out.println(line);
                }
            }
        }
    }

2b) BufferedReader – Explicit Encoding

In a similar way to how we set the encoding explicitly for FileReader, we need to create a FileInputStream, wrap it inside an InputStreamReader with an explicit encoding and pass that to BufferedReader:

    import java.io.BufferedReader;
    import java.io.FileInputStream;
    import java.io.IOException;
    import java.io.InputStreamReader;

    public class ReadFile_BufferedReader_ReadLine_Encoding {
        public static void main(String[] args) throws IOException {
            String fileName = "c:\\temp\\sample-10KB.txt";

            FileInputStream fileInputStream = new FileInputStream(fileName);

            //specify UTF-8 encoding explicitly
            InputStreamReader inputStreamReader = new InputStreamReader(fileInputStream, "UTF-8");

            try (BufferedReader bufferedReader = new BufferedReader(inputStreamReader)) {
                String line;
                while ((line = bufferedReader.readLine()) != null) {
                    System.out.println(line);
                }
            }
        }
    }
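As a possible shortcut, Java 7 and later also offer Files.newBufferedReader(Path, Charset), which builds the same kind of reader chain in a single call. It was not part of the performance tests in this article; the sketch below uses a hypothetical class name and a temporary file in place of the sample file.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.List;

public class NewBufferedReaderSketch {

    // Hypothetical helper: reads every line of a UTF-8 text file into a list.
    static List<String> readLines(Path path) throws IOException {
        List<String> lines = new ArrayList<>();
        try (BufferedReader reader = Files.newBufferedReader(path, StandardCharsets.UTF_8)) {
            String line;
            while ((line = reader.readLine()) != null) {
                lines.add(line);
            }
        }
        return lines;
    }

    public static void main(String[] args) throws IOException {
        // A small temporary file stands in for the article's sample file.
        Path sample = Files.createTempFile("sample", ".txt");
        Files.write(sample, "first line\nsecond line\n".getBytes(StandardCharsets.UTF_8));
        try {
            for (String line : readLines(sample)) {
                System.out.println(line);
            }
        } finally {
            Files.delete(sample);
        }
    }
}
```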

Classic I/O – Reading Bytes

1) FileInputStream

FileInputStream reads in one byte at a time, without any buffering. While it's meant for reading binary files such as images or audio files, it can still be used to read text files. It's similar to reading with FileReader in that you're reading one value at a time as an integer and you need to cast that int to a char to see the character.

FileInputStream works on raw bytes and applies no character encoding, so casting each byte to a char only gives the right character for single-byte text such as ASCII.

    import java.io.File;
    import java.io.FileInputStream;
    import java.io.FileNotFoundException;
    import java.io.IOException;

    public class ReadFile_FileInputStream_Read {
        public static void main(String[] pArgs) throws FileNotFoundException, IOException {
            String fileName = "c:\\temp\\sample-10KB.txt";
            File file = new File(fileName);

            try (FileInputStream fileInputStream = new FileInputStream(file)) {
                int singleCharInt;
                char singleChar;

                while ((singleCharInt = fileInputStream.read()) != -1) {
                    singleChar = (char) singleCharInt;
                    System.out.print(singleChar);
                }
            }
        }
    }

2) BufferedInputStream

BufferedInputStream reads a set of bytes all at once into an internal byte array buffer. The buffer size can be set explicitly or left at the default, which is what we'll demonstrate in our example. The default buffer size appears to be 8KB but I have not explicitly verified this. All performance tests used the default buffer size so it will automatically re-size the buffer when it needs to.

    import java.io.BufferedInputStream;
    import java.io.File;
    import java.io.FileInputStream;
    import java.io.FileNotFoundException;
    import java.io.IOException;

    public class ReadFile_BufferedInputStream_Read {
        public static void main(String[] pArgs) throws FileNotFoundException, IOException {
            String fileName = "c:\\temp\\sample-10KB.txt";
            File file = new File(fileName);
            FileInputStream fileInputStream = new FileInputStream(file);

            try (BufferedInputStream bufferedInputStream = new BufferedInputStream(fileInputStream)) {
                int singleCharInt;
                char singleChar;
                while ((singleCharInt = bufferedInputStream.read()) != -1) {
                    singleChar = (char) singleCharInt;
                    System.out.print(singleChar);
                }
            }
        }
    }
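For completeness, this is roughly what setting the buffer size explicitly could look like, using the two-argument BufferedInputStream constructor. The 16KB size, the class name and the countBytes helper are arbitrary choices for this sketch and were not part of the performance tests; an in-memory stream stands in for a FileInputStream.

```java
import java.io.BufferedInputStream;
import java.io.ByteArrayInputStream;
import java.io.IOException;
import java.io.InputStream;

public class BufferedInputStreamSizeSketch {

    // Hypothetical helper: counts the bytes in a stream, reading through a
    // BufferedInputStream whose internal buffer size is chosen by the caller.
    static long countBytes(InputStream source, int bufferSize) throws IOException {
        long total = 0;
        try (BufferedInputStream in = new BufferedInputStream(source, bufferSize)) {
            byte[] chunk = new byte[bufferSize];
            int bytesRead;
            while ((bytesRead = in.read(chunk)) != -1) {
                total += bytesRead;
            }
        }
        return total;
    }

    public static void main(String[] args) throws IOException {
        // In-memory data stands in for a FileInputStream on a real file.
        InputStream source = new ByteArrayInputStream(new byte[10_000]);
        System.out.println(countBytes(source, 16 * 1024) + " bytes read");
    }
}
```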

New I/O – Reading Text

1a) Files.readAllLines() – Default Encoding

The Files class is part of the new Java I/O classes introduced in jdk1.7. It only has static utility methods for working with files and directories.

The readAllLines() method that uses the default character encoding was introduced in jdk1.8 so this example will not work in Java 7.

    import java.io.File;
    import java.io.IOException;
    import java.nio.file.Files;
    import java.util.List;

    public class ReadFile_Files_ReadAllLines {
        public static void main(String[] pArgs) throws IOException {
            String fileName = "c:\\temp\\sample-10KB.txt";
            File file = new File(fileName);

            List<String> fileLinesList = Files.readAllLines(file.toPath());
            for (String line : fileLinesList) {
                System.out.println(line);
            }
        }
    }

1b) Files.readAllLines() – Explicit Encoding

    import java.io.File;
    import java.io.IOException;
    import java.nio.charset.StandardCharsets;
    import java.nio.file.Files;
    import java.util.List;

    public class ReadFile_Files_ReadAllLines_Encoding {
        public static void main(String[] pArgs) throws IOException {
            String fileName = "c:\\temp\\sample-10KB.txt";
            File file = new File(fileName);

            //use UTF-8 encoding
            List<String> fileLinesList = Files.readAllLines(file.toPath(), StandardCharsets.UTF_8);

            for (String line : fileLinesList) {
                System.out.println(line);
            }
        }
    }

2a) Files.lines() – Default Encoding

This code was tested to work in Java 8 and 9. It didn't run on Java 7 because of the lack of support for lambda expressions.

    import java.io.File;
    import java.io.IOException;
    import java.nio.file.Files;
    import java.util.stream.Stream;

    public class ReadFile_Files_Lines {
        public static void main(String[] pArgs) throws IOException {
            String fileName = "c:\\temp\\sample-10KB.txt";
            File file = new File(fileName);

            try (Stream<String> linesStream = Files.lines(file.toPath())) {
                linesStream.forEach(line -> {
                    System.out.println(line);
                });
            }
        }
    }

2b) Files.lines() – Explicit Encoding

Just like in the previous example, this code was tested and works in Java 8 and 9 but not in Java 7.

    import java.io.File;
    import java.io.IOException;
    import java.nio.charset.StandardCharsets;
    import java.nio.file.Files;
    import java.util.stream.Stream;

    public class ReadFile_Files_Lines_Encoding {
        public static void main(String[] pArgs) throws IOException {
            String fileName = "c:\\temp\\sample-10KB.txt";
            File file = new File(fileName);

            try (Stream<String> linesStream = Files.lines(file.toPath(), StandardCharsets.UTF_8)) {
                linesStream.forEach(line -> {
                    System.out.println(line);
                });
            }
        }
    }
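If you would rather keep the lines than print them, the stream returned by Files.lines() can be collected into an array. The following is only a sketch for Java 8 and later: the class name and the readLinesToArray helper are made up, and a temporary file replaces the sample file.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.stream.Stream;

public class FilesLinesToArraySketch {

    // Hypothetical helper: collects every line of a UTF-8 file into a String array.
    static String[] readLinesToArray(Path path) throws IOException {
        try (Stream<String> lines = Files.lines(path, StandardCharsets.UTF_8)) {
            return lines.toArray(String[]::new);
        }
    }

    public static void main(String[] args) throws IOException {
        // A small temporary file stands in for the article's sample file.
        Path sample = Files.createTempFile("sample", ".txt");
        Files.write(sample, "red\ngreen\nblue\n".getBytes(StandardCharsets.UTF_8));
        try {
            String[] lines = readLinesToArray(sample);
            System.out.println(lines.length + " lines, first: " + lines[0]);
        } finally {
            Files.delete(sample);
        }
    }
}
```

Note that collecting everything into an array gives up the main benefit of a lazy stream, so this only makes sense for files that fit comfortably in memory.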

3a) Scanner – Default Encoding

The Scanner class has been part of Java since JDK 1.5 and can be used to read from files or from the console (user input).

    import java.io.File;
    import java.io.FileNotFoundException;
    import java.util.Scanner;

    public class ReadFile_Scanner_NextLine {
        public static void main(String[] pArgs) throws FileNotFoundException {
            String fileName = "c:\\temp\\sample-10KB.txt";
            File file = new File(fileName);

            try (Scanner scanner = new Scanner(file)) {
                String line;
                while (scanner.hasNextLine()) {
                    line = scanner.nextLine();
                    System.out.println(line);
                }
            }
        }
    }

3b) Scanner – Explicit Encoding

    import java.io.File;
    import java.io.FileNotFoundException;
    import java.util.Scanner;

    public class ReadFile_Scanner_NextLine_Encoding {
        public static void main(String[] pArgs) throws FileNotFoundException {
            String fileName = "c:\\temp\\sample-10KB.txt";
            File file = new File(fileName);

            //use UTF-8 encoding
            try (Scanner scanner = new Scanner(file, "UTF-8")) {
                String line;
                while (scanner.hasNextLine()) {
                    line = scanner.nextLine();
                    System.out.println(line);
                }
            }
        }
    }

New I/O – Reading Bytes

Files.readAllBytes()

Even though the documentation for this method states that "it is not intended for reading in large files", I found this to be the absolute best performing file reading method, even on files as large as 1GB.

    import java.io.File;
    import java.io.IOException;
    import java.nio.file.Files;

    public class ReadFile_Files_ReadAllBytes {
        public static void main(String[] pArgs) throws IOException {
            String fileName = "c:\\temp\\sample-10KB.txt";
            File file = new File(fileName);

            byte[] fileBytes = Files.readAllBytes(file.toPath());
            char singleChar;
            for (byte b : fileBytes) {
                singleChar = (char) b;
                System.out.print(singleChar);
            }
        }
    }
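Casting each byte to a char works for plain ASCII text, but it breaks characters that take more than one byte. If you need the file's contents as a char array, one option is to decode the bytes with an explicit encoding first. This sketch uses a hypothetical class name and readAllChars helper, with a temporary file in place of the sample file.

```java
import java.io.IOException;
import java.nio.charset.Charset;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;

public class ReadAllBytesToCharsSketch {

    // Hypothetical helper: reads the whole file, decodes it, and returns its characters.
    static char[] readAllChars(Path path, Charset charset) throws IOException {
        byte[] fileBytes = Files.readAllBytes(path);
        return new String(fileBytes, charset).toCharArray();
    }

    public static void main(String[] args) throws IOException {
        // A small temporary file stands in for the article's sample file.
        Path sample = Files.createTempFile("sample", ".txt");
        // "h\u00e9llo" contains an accented e, which is two bytes in UTF-8.
        Files.write(sample, "h\u00e9llo".getBytes(StandardCharsets.UTF_8));
        try {
            char[] chars = readAllChars(sample, StandardCharsets.UTF_8);
            System.out.println(Files.size(sample) + " bytes decoded into " + chars.length + " chars");
        } finally {
            Files.delete(sample);
        }
    }
}
```

Like readAllBytes() itself, this holds the entire file in memory, plus a decoded copy, so it is best suited to files of modest size.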

3rd Party I/O – Reading Text

Commons – FileUtils.readLines()

Apache Commons IO is an open source Java library that comes with utility classes for reading and writing text and binary files. I listed it in this article because it can be used instead of the built in Java libraries. The class we're using is FileUtils.

For this article, version 2.6 was used, which is compatible with JDK 1.7+.

Note that you need to explicitly specify the encoding and that the method for using the default encoding has been deprecated.

    import java.io.File;
    import java.io.IOException;
    import java.util.List;

    import org.apache.commons.io.FileUtils;

    public class ReadFile_Commons_FileUtils_ReadLines {
        public static void main(String[] pArgs) throws IOException {
            String fileName = "c:\\temp\\sample-10KB.txt";
            File file = new File(fileName);

            List<String> fileLinesList = FileUtils.readLines(file, "UTF-8");
            for (String line : fileLinesList) {
                System.out.println(line);
            }
        }
    }

Guava – Files.readLines()

Google Guava is an open source library that comes with utility classes for common tasks like collections handling, cache management, IO operations, string processing.

I listed it in this article because it can be used instead of the built in Java libraries and I wanted to compare its performance with the Java built in libraries.

For this article, version 23.0 was used.

I'm not going to examine all the different ways to read files with Guava, since this article is not meant for that. For a more detailed look at all the different ways to read and write files with Guava, have a look at Baeldung's in depth article.

When reading a file, Guava requires that the character encoding be set explicitly, just like Apache Commons.

Compatibility note: This code was tested successfully on Java 8 and 9. I couldn't get it to work on Java 7 and kept getting an "Unsupported major.minor version 52.0" error. Guava has a separate API doc for Java 7 which uses a slightly different version of the Files.readLines() method. I thought I could get it to work but I kept getting that error.

    import java.io.File;
    import java.io.IOException;
    import java.util.List;

    import com.google.common.base.Charsets;
    import com.google.common.io.Files;

    public class ReadFile_Guava_Files_ReadLines {
        public static void main(String[] args) throws IOException {
            String fileName = "c:\\temp\\sample-10KB.txt";
            File file = new File(fileName);

            List<String> fileLinesList = Files.readLines(file, Charsets.UTF_8);
            for (String line : fileLinesList) {
                System.out.println(line);
            }
        }
    }

Performance Testing

Since there are so many ways to read from a file in Java, a natural question is "What file reading method is the best for my situation?" So I decided to test each of these methods against each other using sample data files of different sizes and timing the results.

Each code sample from this article displays the contents of the file to a string and then to the console (System.out). However, during the performance tests the System.out line was commented out since it would seriously slow down the performance of each method.

Each performance test measures the time it takes to read in the file – line by line, character by character, or byte by byte without displaying anything to the console. I ran each test 5-10 times and took the average so as not to let any outliers influence each test. I also ran the default encoding version of each file reading method – i.e. I didn't specify the encoding explicitly.
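The exact test harness isn't shown in this article, so the following is only a guess at what such a timing loop could look like: run a read several times, time each run with System.nanoTime() and average the results. The FileReadTask interface, the averageMillis helper and the temporary test file are all hypothetical.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;

public class ReadTimingSketch {

    // Hypothetical callback type so a read that throws IOException can be passed as a lambda.
    interface FileReadTask {
        void read(Path path) throws IOException;
    }

    // Runs the task the given number of times and returns the average duration in milliseconds.
    static double averageMillis(FileReadTask task, Path path, int runs) throws IOException {
        long totalNanos = 0;
        for (int i = 0; i < runs; i++) {
            long start = System.nanoTime();
            task.read(path);
            totalNanos += System.nanoTime() - start;
        }
        return totalNanos / (double) runs / 1_000_000.0;
    }

    public static void main(String[] args) throws IOException {
        // A 10KB temporary file stands in for the article's test files.
        Path sample = Files.createTempFile("sample", ".txt");
        Files.write(sample, new byte[10 * 1024]);
        try {
            double average = averageMillis(p -> Files.readAllBytes(p), sample, 10);
            System.out.println("Files.readAllBytes() average: " + average + " ms");
        } finally {
            Files.delete(sample);
        }
    }
}
```

A simple loop like this is sensitive to JIT warm-up and disk caching, so treat its numbers as rough comparisons rather than precise measurements.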

Dev Setup

The dev environment used for these tests:

- Intel Core i7-3615 QM @2.3 GHz, 8GB RAM

- Windows 8 x64

- Eclipse IDE for Java Developers, Oxygen.2 Release (4.7.2)

- Java SE 9 (jdk-9.0.4)

Data Files

GitHub doesn't allow pushing files larger than 100 MB, so I couldn't find a practical way to store my large test files to allow others to replicate my tests. So instead of storing them, I'm providing the tools I used to generate them so you can create test files that are similar in size to mine. Obviously they won't be the same, but you'll generate files that are similar in size to the ones I used in my performance tests.

Random String Generator was used to generate sample text and then I simply copy-pasted to create larger versions of the file. When the file started getting too big to manage inside a text editor, I had to use the command line to merge multiple text files into a larger text file:

copy *.txt sample-1GB.txt

I created the following 7 data file sizes to test each file reading method across a range of file sizes:

- 1KB

- 10KB

- 100KB

- 1MB

- 10MB

- 100MB

- 1GB
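If you'd rather not copy and paste text by hand, a test file of a given size can also be generated in code. This is not how the files for this article were produced; it is a sketch with a hypothetical class name and writeRandomTextFile helper that writes lines of random lowercase letters until the requested size is reached.

```java
import java.io.BufferedWriter;
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.Random;

public class TestFileGeneratorSketch {

    // Hypothetical helper: fills the file with random lowercase ASCII text,
    // in lines of at most 80 bytes (newline included), totalling sizeInBytes bytes.
    static void writeRandomTextFile(Path path, long sizeInBytes, long seed) throws IOException {
        Random random = new Random(seed);
        try (BufferedWriter writer = Files.newBufferedWriter(path, StandardCharsets.US_ASCII)) {
            long written = 0;
            while (written < sizeInBytes) {
                int lineLength = (int) Math.min(80, sizeInBytes - written);
                for (int i = 0; i < lineLength - 1; i++) {
                    writer.write('a' + random.nextInt(26));
                }
                writer.write('\n');
                written += lineLength;
            }
        }
    }

    public static void main(String[] args) throws IOException {
        // Writes a 10KB file to the system temp directory; adjust path and size as needed.
        Path sample = Files.createTempFile("sample-10KB", ".txt");
        writeRandomTextFile(sample, 10 * 1024, 42L);
        System.out.println(sample + " is " + Files.size(sample) + " bytes");
    }
}
```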

Performance Summary

There were some surprises and some expected results from the performance tests.

As expected, the worst performers were the methods that read in a file character by character or byte by byte. But what surprised me was that the native Java IO libraries outperformed both 3rd party libraries – Apache Commons IO and Google Guava.

What's more – both Google Guava and Apache Commons IO threw a java.lang.OutOfMemoryError when trying to read in the 1GB test file. This also happened with the Files.readAllLines(Path) method but the remaining 7 methods were able to read in all test files, including the 1GB test file.

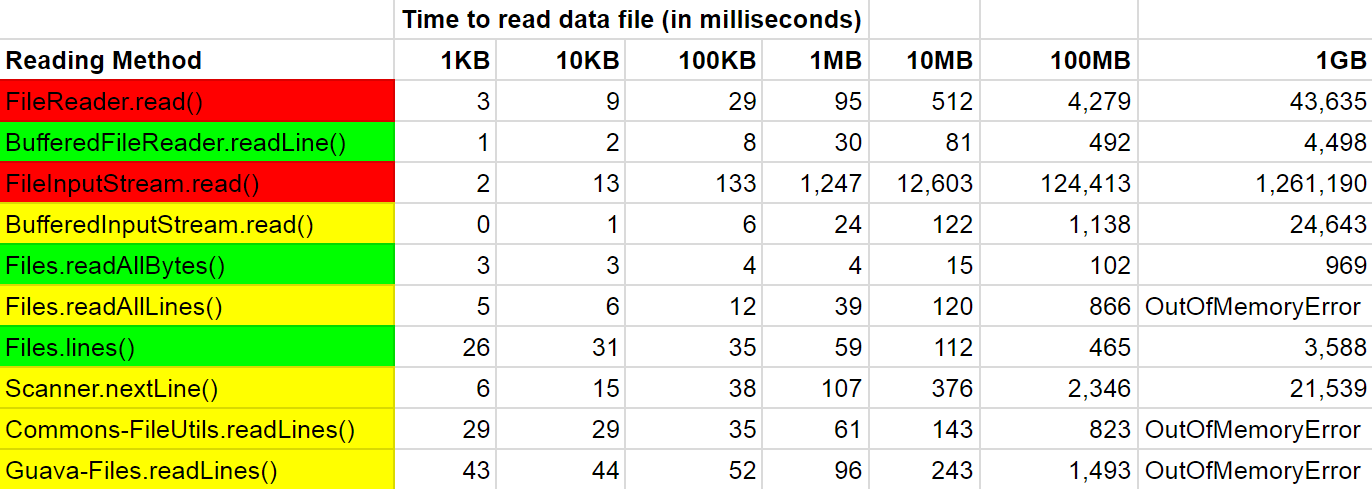

The following table summarizes the average time (in milliseconds) each file reading method took to complete. I highlighted the top three methods in green, the average performing methods in yellow and the worst performing methods in red:

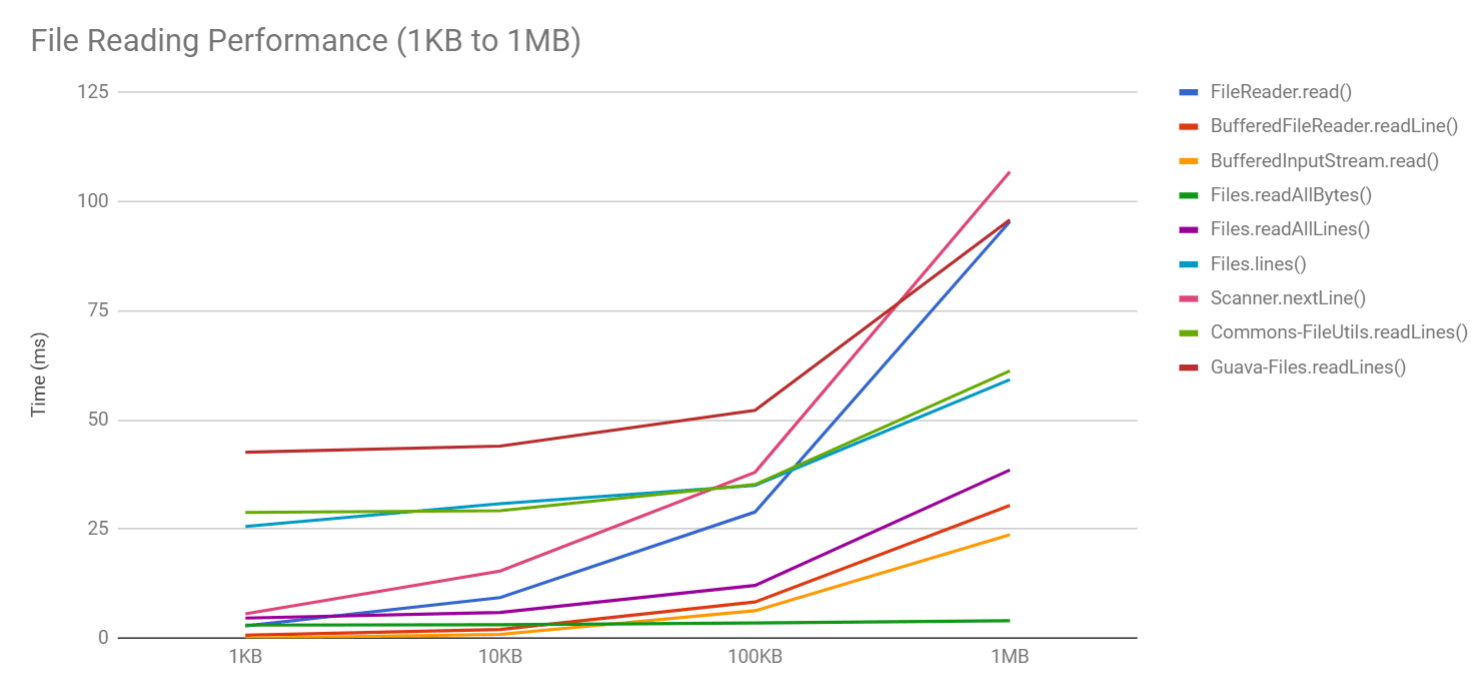

The following chart summarizes the above table but with the following changes:

- I removed java.io.FileInputStream.read() from the chart because its performance was so bad it would skew the entire chart and you wouldn't see the other lines properly

- I summarized the data from 1KB to 1MB because after that, the chart would get too skewed with so many under performers and also some methods threw a java.lang.OutOfMemoryError at 1GB

The Winners

The new Java I/O libraries (java.nio) had the best overall winner (java.nio.file.Files.readAllBytes()) but it was followed closely by BufferedReader.readLine(), which was also a proven top performer across the board. The other excellent performer was java.nio.file.Files.lines(Path), which had slightly worse numbers for smaller test files but really excelled with the larger test files.

The absolute fastest file reader across all data tests was java.nio.file.Files.readAllBytes(Path). It was consistently the fastest and even reading a 1GB file only took about 1 second.

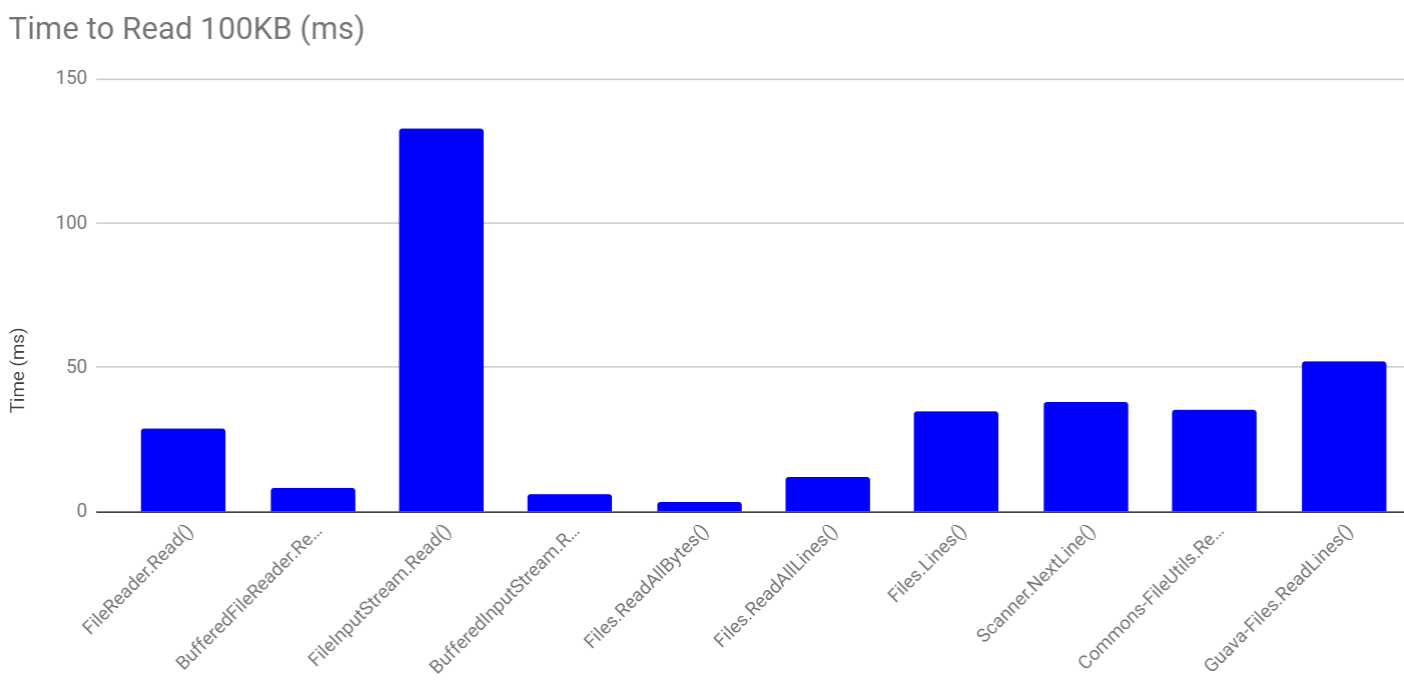

The following chart compares performance for a 100KB test file:

You can see that the lowest times were for Files.readAllBytes(), BufferedInputStream.read() and BufferedReader.readLine().

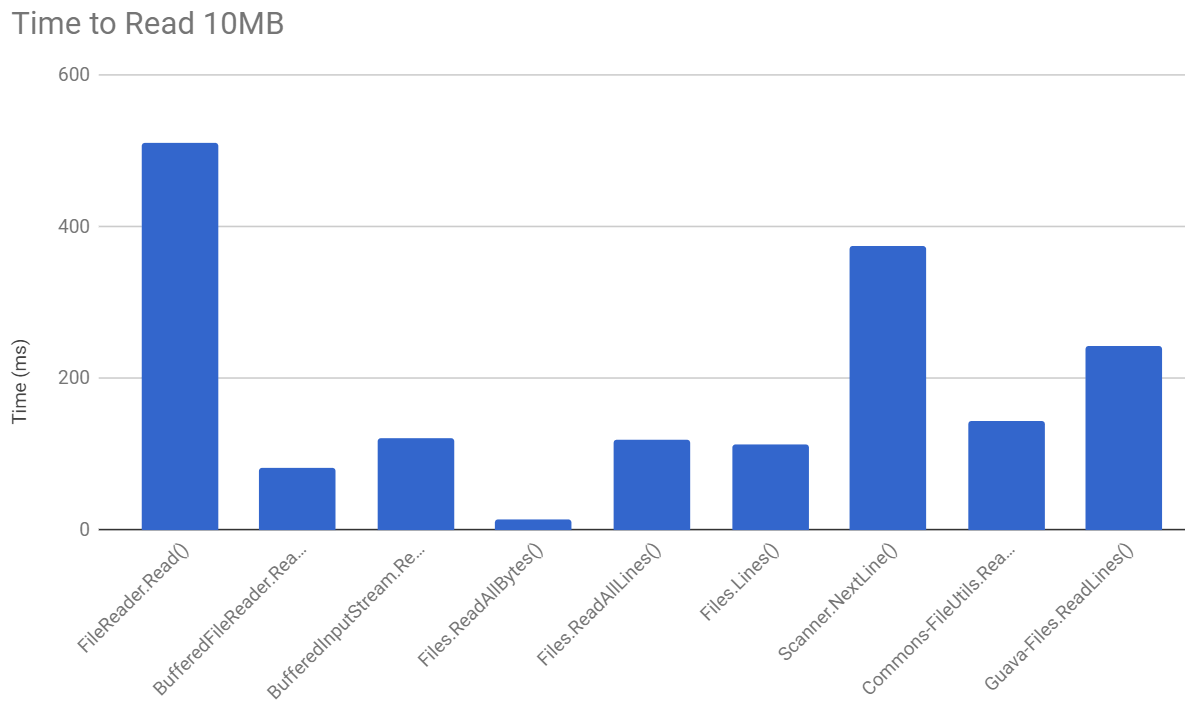

The following chart compares performance for reading a 10MB file. I didn't bother including the bar for FileInputStream.read() because the performance was so bad it would skew the entire chart and you couldn't tell how the other methods performed relative to each other:

Files.readAllBytes() really outperforms all other methods and BufferedReader.readLine() is a distant second.

The Losers

As expected, the absolute worst performer was java.io.FileInputStream.read(), which was orders of magnitude slower than its rivals for most tests. FileReader.read() was also a poor performer for the same reason – reading files byte by byte (or character by character) instead of with buffers drastically degrades performance.

Both the Apache Commons IO FileUtils.readLines() and Guava Files.readLines() crashed with an OutOfMemoryError when trying to read the 1GB test file and they were about average in performance for the remaining test files.

java.nio.file.Files.readAllLines() also crashed when trying to read the 1GB test file but it performed quite well for smaller file sizes.
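The three methods that ran out of memory all return a List containing every line of the file, so the whole file has to fit in memory at once. A streaming read only ever holds one line at a time. As a rough sketch of that difference (the class name and countLines helper are made up, and a StringReader stands in for a large file):

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.Reader;
import java.io.StringReader;

public class StreamingLineCountSketch {

    // Hypothetical helper: counts lines without keeping them, so memory use
    // stays roughly constant no matter how large the input is.
    static long countLines(Reader source) throws IOException {
        long count = 0;
        try (BufferedReader reader = new BufferedReader(source)) {
            while (reader.readLine() != null) {
                count++;
            }
        }
        return count;
    }

    public static void main(String[] args) throws IOException {
        // For a real file, pass a FileReader or InputStreamReader instead.
        System.out.println(countLines(new StringReader("a\nb\nc")) + " lines");
    }
}
```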

Performance Rankings

Here's a ranked list of how well each file reading method did, in terms of speed and handling of large files, as well as compatibility with different Java versions.

| Rank | File Reading Method |
|---|---|
| 1 | java.nio.file.Files.readAllBytes() |
| 2 | java.io.BufferedReader.readLine() |
| 3 | java.nio.file.Files.lines() |
| 4 | java.io.BufferedInputStream.read() |
| 5 | java.util.Scanner.nextLine() |
| 6 | java.nio.file.Files.readAllLines() |
| 7 | org.apache.commons.io.FileUtils.readLines() |
| 8 | com.google.common.io.Files.readLines() |
| 9 | java.io.FileReader.read() |
| 10 | java.io.FileInputStream.read() |

Conclusion

I tried to present a comprehensive set of methods for reading files in Java, both text and binary. We looked at 15 different ways of reading files in Java and we ran performance tests to see which methods are the fastest.

The new Java IO library (java.nio) proved to be a great performer but so was the classic BufferedReader.

Source: https://funnelgarden.com/java_read_file/
